Green?

Fun!

An honorable mention goes to …

[root@swallowtail ~]# bjobs -u qgu

| JOBID | USER | STAT | QUEUE | FROM_HOST | EXEC_HOST | JOB_NAME | SUBMIT_TIME |
|---|---|---|---|---|---|---|---|
| 34729 | qgu | RUN | ehwfd | swallowtail | 6*nfs-2-2 | Happy_New_Year | Dec 25 17:54 |
| 34730 | qgu | RUN | ehw | swallowtail | 6*compute-2-30 | Merry_Christmas | Dec 25 17:58 |

Over the top …

| JOBID | USER | STAT | QUEUE | FROM_HOST | EXEC_HOST | JOB_NAME | SUBMIT_TIME |
|---|---|---|---|---|---|---|---|
| 36865 | qgu | RUN | imw | swallowtail | 8*compute-1-1 | ILoveYou | Jan 18 15:51 |

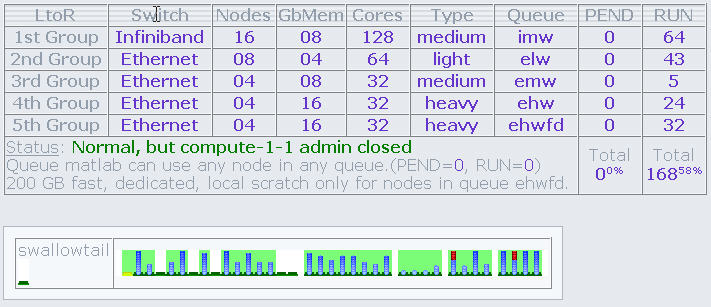

Make Over

A new look for Clumon. Information about primary queues, nodes, memory and total job slots. Also listed are the number of jobs pending and running (although those stats run about 10 minutes late). For up-to-date stats use bqueues. Move that Bus!

"Best Practices" Workshop

This workshop should give you a good understanding of how to find information from the scheduler as well as how to provide information to the scheduler. The better the job requirements are matched with the node/queue resources, the more efficiently the job runs, without wasting resources that others could use.

We'll experience jobs in pending states for sure. In addition, some users may need to submit hundreds of tiny jobs while others submit few jobs but require lots of resources. So the idea is to walk through some basics of interacting with the scheduler, dig for information, and submit your jobs with ἐνθουσιασμός! Enthousiasmos! You know, gusto!

And ask any and all questions.

Tivoli

Try not to write continually to output files located in your home directory. Each time Tivoli runs an incremental backup, it backs up files whose metadata changed (name, size, time stamp). This happens every day/night. In addition, the old active copy becomes the inactive copy (yesterday's copy) and the old inactive copy gets deleted. That's a lot of work.

It is currently quite taxing on the system. For example, this run took 17+ hours.

    01/08/08 14:11:53 --- SCHEDULEREC STATUS BEGIN
    01/08/08 14:11:53 Total number of objects inspected: 3,904,939
    01/08/08 14:11:53 Total number of objects backed up:     1,217
    01/08/08 14:11:53 Total number of objects updated:           0
    01/08/08 14:11:53 Total number of objects rebound:           0
    01/08/08 14:11:53 Total number of objects deleted:           0
    01/08/08 14:11:53 Total number of objects expired:         368
    01/08/08 14:11:53 Total number of objects failed:           35
    01/08/08 14:11:53 Total number of bytes transferred:   105.88 GB
    01/08/08 14:11:53 Data transfer time:                  970.97 sec
    01/08/08 14:11:53 Network data transfer rate:      114,346.59 KB/sec
    01/08/08 14:11:53 Aggregate data transfer rate:      1,776.46 KB/sec
    01/08/08 14:11:53 Objects compressed by:                   69%
    01/08/08 14:11:53 Elapsed processing time:            17:21:39
    01/08/08 14:11:53 --- SCHEDULEREC STATUS END

For example, not to pick on any particular user, let's look at this. Here is a 1 MB file that continuously changes.

    01/02/08 12:17:09 Normal File-->   965,327 /tivoli/home/rusers/chsu/grand-canonical/t0.079-smaller/mu0.32-3/t0.079mu0.32.dat Changed
    01/03/08 13:34:46 Normal File--> 1,051,686 /tivoli/home/rusers/chsu/grand-canonical/t0.079-smaller/mu0.32-3/t0.079mu0.32.dat Changed
    01/04/08 19:42:46 Normal File--> 1,145,048 /tivoli/home/rusers/chsu/grand-canonical/t0.079-smaller/mu0.32-3/t0.079mu0.32.dat Changed
    01/05/08 17:27:15 Normal File--> 1,216,967 /tivoli/home/rusers/chsu/grand-canonical/t0.079-smaller/mu0.32-3/t0.079mu0.32.dat Changed
    01/06/08 14:55:17 Normal File--> 1,287,186 /tivoli/home/rusers/chsu/grand-canonical/t0.079-smaller/mu0.32-3/t0.079mu0.32.dat Changed

However, there may be reasons why you need to write continuously to files in your home directory. This may have to do with the ability to restart your job if it crashes or is terminated for another reason. You could write these “restart” files inside your job working directory local to the compute nodes, and have your script copy them back to your home directory. But what if the cluster or the scheduler crashes? Now you may have lost months of compute time.

So, to relieve the Tivoli backup system a bit, i've introduced a directive in its configuration to specifically skip, <hi #ffc0cb>that is, not back up, any directories with the name “nobackup”</hi>. You could now write your data files there, and when the job finishes normally you delete those files. If your job crashes for some reason, you can pick up your “restart” files there.

The files in the ~/nobackup directory will be backed up via the filer snapshotting; once daily, once nightly and once weekly. But these snapshot copies on the filer take much less disk space and are incremental backups of changed blocks only, not multiple copies of an entire file.

<hi #ffff00>Try to place files that change continuously in directories named “nobackup”.</hi>

Then move the final version of those files to their destination.

Or use /sanscratch, but beware: no filer snapshots cover /sanscratch.
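The nobackup pattern described above can be sketched as a small shell helper. This is only an illustration, not the site's own script: the function name `run_in_nobackup`, the file name `progress.dat` and the per-job subdirectory are assumptions made for the example. `LSB_JOBID` is the job ID variable LSF sets inside a running job.

```shell
#!/bin/bash
# run_in_nobackup BASE DEST CMD [ARGS...]
# Hypothetical helper: runs CMD inside BASE/nobackup/<jobid> and captures its
# standard output in progress.dat. Only when CMD succeeds is the final file
# moved to DEST; on failure the work directory stays in place for a restart.
run_in_nobackup() {
    local base="$1" dest="$2"
    shift 2
    # LSB_JOBID is set by LSF inside a job; "manual" is a fallback for dry runs.
    local work="$base/nobackup/${LSB_JOBID:-manual}"
    mkdir -p "$work" "$dest" || return 1
    ( cd "$work" && "$@" > progress.dat ) || return 1
    mv "$work/progress.dat" "$dest/" && rmdir "$work"
}
```

Inside a job script this might be called as `run_in_nobackup "$HOME" "$HOME/results" ./my_program`, where both `results` and `my_program` are placeholder names.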

Filesystem Tips

df -h .

Keep track of that.

Users' home directories are spread across a dozen or so LUNs (logical unit numbers). Each is 1 TB in size, but “thin provisioned” on the NetApp storage device. On that device, all home directories are inside a 4 TB volume. So a LUN can only grow to 1 TB if that space is actually available.

On occasion, issue the df command inside your home directory. If a LUN fills up it will automatically offline itself. When that happens all user jobs running against that LUN will also crash, which was one of the reasons for using many LUNs.

There are currently no file system quotas in place. We are relying on savvy users with some sense of housekeeping duties.

    [root@swallowtail log]# cd ~qgu
    [root@swallowtail qgu]# df -h .
    Filesystem            Size  Used Avail Use% Mounted on
    /state/partition1/home/rusers2/qgu
                         1008G  270G  688G  29% /home/qgu
    [root@swallowtail qgu]# cd /state/partition1/home/rusers2
    [root@swallowtail rusers2]# ls
    adavis02  aminei   dbblum    ebarnes  gpetersson  jbodyfelt  lost+found   mlee03  vclapa    yminami
    adezieck  bkormos  dfrohman  gng      imukerji    jknee      LUN:rusers2  qgu     wpringle  ztan

Scratch

- /sanscratch vs /localscratch

As detailed in numerous pages, /sanscratch is a 1 TB file system which is shared by all nodes and visible on the head node. /localscratch is much smaller, roughly a 70 GB file system local to each node, except on the nfs-2-? nodes comprising the ehwfd queue. These nodes have roughly 200 GB of /localscratch available (made up of seven 15,000 rpm 36 GB disks, striped, not mirrored, with RAID 0).

If you wish to be able to observe your job progress then use the $MYSANSCRATCH location as your working directory. You can then find the location of your files in /sanscratch/JOBID on the head node.

You may wish to reserve scratch space for your job. Use the #BSUB -R "rusage[name=value]" syntax, where name is either localscratch or sanscratch and value is the size of the scratch area needed in MB.

Be careful reserving disk space in /sanscratch. It could affect many other jobs.
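As a rough sketch of the reservation syntax in a job script: the queue, job name, output file names and the 2000 MB figure below are example values chosen for illustration, not recommendations. The fallback to the current directory is an assumption added so the script can be dry-run outside the scheduler.

```shell
#!/bin/bash
# Sketch of a job script that reserves scratch space (all values are examples).
#BSUB -q ehw
#BSUB -J scratch_demo
#BSUB -o out.%J
#BSUB -e err.%J
# Reserve 2000 MB of /sanscratch for this job.
#BSUB -R "rusage[sanscratch=2000]"

# MYSANSCRATCH is provided inside a job on this cluster; fall back to the
# current directory when the script is run outside the scheduler.
WORKDIR="${MYSANSCRATCH:-$PWD}"
cd "$WORKDIR" || exit 1
echo "working directory: $WORKDIR"
```

Submit it with `bsub < scriptname`, the same way the sample job further down this page is submitted.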

Queue Policies

    [root@swallowtail configdir]# bqueues -l | egrep -i "^queue|policies"
    QUEUE: elw
    SCHEDULING POLICIES:  FAIRSHARE  BACKFILL  EXCLUSIVE  NO_INTERACTIVE
    QUEUE: emw
    SCHEDULING POLICIES:  FAIRSHARE  BACKFILL  EXCLUSIVE  NO_INTERACTIVE
    QUEUE: ehw
    SCHEDULING POLICIES:  FAIRSHARE  BACKFILL  EXCLUSIVE  NO_INTERACTIVE
    QUEUE: ehwfd
    SCHEDULING POLICIES:  FAIRSHARE  BACKFILL  EXCLUSIVE  NO_INTERACTIVE
    QUEUE: imw
    SCHEDULING POLICIES:  FAIRSHARE  BACKFILL  EXCLUSIVE  NO_INTERACTIVE
    QUEUE: matlab
      -- Max jobs limited by licensed workers. Scheduling Policies: FIRST-COME-FIRST-SERVED

- FCFS: Considers jobs for dispatch in the same order as they appear in the queue (which is not necessarily the order in which they are submitted to the queue). Evaluated at each job scheduling interval (default is 30 seconds).

- Fairshare: Divides the processing power of the cluster among users and queues to provide fair access to resources; so that no user or queue can monopolize the resources of the cluster and no queue will be starved. It does not need to be equal share; can be user based or queue based.

- Exclusive: Dispatches the job to a host that has no other jobs running, and does not place any more jobs on the host until the exclusive job is finished. (Hint: no need to reserve any other resources when invoked!).

- Backfill: Allows other jobs to use reserved job slots, as long as the other jobs will not delay the start of another job. Backfilling, together with job slot reservation, allows large parallel jobs to run while not underutilizing resources. Jobs with short run limits have more chance of being scheduled as backfill jobs.

These are the current policies we are playing with. They have no real impact right now, but as we get more knowledgeable about job submissions, and volume increases, the scheduler may start to schedule jobs differently.

Here is a rough synopsis.

Queue matlab is FCFS. All other queues have a variety of policies. The overarching policy is fairshare. Each user has a default share of '1'. This number is calculated at each scheduling interval. If a user has been able to submit jobs to this queue, and has many pending, the share value will decrease. Others who have no jobs running and jobs pending will increase in share value. The share value then determines job scheduling priority. Fairshare only applies in an environment where there is resource contention, meaning jobs are pending.

The policies of Backfill and Exclusive are fairly straightforward. More details about these policies are mentioned below in the context of job submissions.

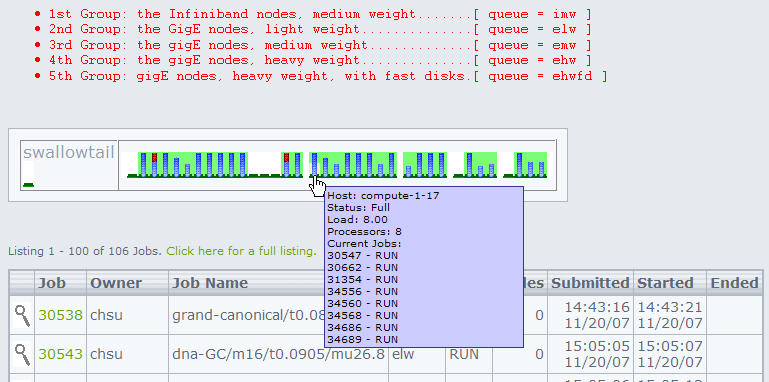

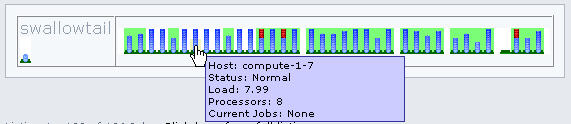

Clumon

- not reliable information

The installed Clumon application is beta quality. It has a list of known bugs which involve: incorrect data for “nodes” the job runs on, incomplete data for the jobs page, and no data for the queues page. Basically don't trust the data presented but verify with command line tools. A newer version will be installed in the future.

However, Clumon has some nice features (when it works!).

- it shows the JOBID running on each node

- it shows idle nodes

- it shows busy nodes (but not the availability of job slots!)

- it shows nodes in groups presenting the queue memberships

- it does show zombies (nodes that are busy but without any jobs running)

The command line tools listed below will provide you with similar information. We'll use them in the How To section below. Read the manual page for each of these tools (man tool_name).

- lsload

- bqueues

- bhosts

- bjobs

- bhist

- brun

How To?

- Find available job slots?

First, the quick way. Run bqueues and compare MAX with NJOBS to assess the availability of job slots on a queue basis.

    [root@swallowtail configdir]# bqueues
    QUEUE_NAME      PRIO STATUS          MAX JL/U JL/P JL/H NJOBS  PEND   RUN  SUSP
    elw              50  Open:Active      64    -    -    -    42     0    42     0
    emw              50  Open:Active      32    -    -    -    10     0    10     0
    ehw              50  Open:Active      32    -    -    -    18     8    10     0
    ehwfd            50  Open:Active      32    -    -    -    16     0    16     0
    imw              50  Open:Active     128    -    -    -    60     0    60     0
    matlab           50  Open:Active       8    8    -    8     0     0     0     0
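The MAX versus NJOBS comparison can also be scripted. The following is a minimal sketch: the function name `queue_free_slots` is made up for this example, and the field positions (MAX in field 4, NJOBS in field 8) are assumed from the header of the listing above. Queues with no MAX set (shown as "-") are skipped.

```shell
#!/bin/bash
# queue_free_slots: read bqueues output on stdin and print "queue free",
# where free = MAX - NJOBS. Field positions are assumed from the header:
# QUEUE_NAME PRIO STATUS MAX JL/U JL/P JL/H NJOBS PEND RUN SUSP
queue_free_slots() {
    awk 'NR > 1 && $4 != "-" { print $1, $4 - $8 }'
}

# Sample taken from the bqueues listing above.
sample='QUEUE_NAME PRIO STATUS MAX JL/U JL/P JL/H NJOBS PEND RUN SUSP
elw 50 Open:Active 64 - - - 42 0 42 0
emw 50 Open:Active 32 - - - 10 0 10 0
ehw 50 Open:Active 32 - - - 18 8 10 0
ehwfd 50 Open:Active 32 - - - 16 0 16 0
imw 50 Open:Active 128 - - - 60 0 60 0
matlab 50 Open:Active 8 8 - 8 0 0 0 0'

printf '%s\n' "$sample" | queue_free_slots
```

On the cluster itself the same function would be fed live data with `bqueues | queue_free_slots`. Note that a positive number only says the queue limit has not been reached; jobs can still pend, as the 8 pending jobs in ehw show.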

On a per host basis you can run bjobs.

    [root@swallowtail ~]# bjobs -m nfs-2-3 -u all
    JOBID   USER      STAT  QUEUE  FROM_HOST    EXEC_HOST  JOB_NAME                   SUBMIT_TIME
    31349   chsu      RUN   ehwfd  swallowtail  nfs-2-3    dna-GC/m16/t0.0900/mu28.0  Nov 21 22:46
    31356   chsu      RUN   ehwfd  swallowtail  nfs-2-3    dna-GC/m16/t0.0890/mu30.0  Nov 21 22:46
    31362   chsu      RUN   ehwfd  swallowtail  nfs-2-3    dna-GC/m16/t0.0885/mu30.8  Nov 21 22:47
    33170   adezieck  RUN   ehwfd  swallowtail  2*nfs-2-3  run101                     Dec 10 13:56

A total of 5, so 3 left. That implies, given resources are available, you could submit jobs on this host.

    [root@swallowtail ~]# lsload nfs-2-3
    HOST_NAME  status  r15s  r1m  r15m  ut   pg    io    ls  it    tmp    swp    mem  gm_ports  localscratch  sanscratch
    nfs-2-3    ok      4.0   5.0  4.9   62%  55.4  4424  1   3282  7108M  3916M  13G  -         2e+05         924278

Tons of memory available, localscratch empty, seems like a good host to submit jobs to.

Another way to quickly observe available job slots is with bhosts output. Note the completely idle nodes with NJOBS = 0. So if you now have a non-parallel program needing one job slot, try to fill up a host with NJOBS = 7. If you have a Gaussian job with %Nprocs = 3, look for a host to fill with NJOBS = 5. Try to fill up nodes so that NJOBS = 8 and the host is closed for any further job submissions. You also need to look at the resources available to make sure your job, or the other jobs, will not crash due to insufficient resources available.

    [root@swallowtail ~]# bhosts | grep -v closed
    HOST_NAME          STATUS       JL/U    MAX  NJOBS    RUN  SSUSP  USUSP    RSV
    compute-1-1        ok              -      8      0      0      0      0      0
    compute-1-13       ok              -      8      4      4      0      0      0
    compute-1-25       ok              -      8      3      3      0      0      0
    compute-1-5        ok              -      8      0      0      0      0      0
    compute-2-28       ok              -      8      6      6      0      0      0
    compute-2-31       ok              -      8      3      3      0      0      0
    nfs-2-2            ok              -      8      2      2      0      0      0
    nfs-2-3            ok              -      8      5      5      0      0      0
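The "fill up a host" advice can be sketched as a filter over bhosts output. This is an illustration only: the function name `hosts_with_room` is invented here, and the field positions (MAX in field 4, NJOBS in field 5) are assumed from the header above. It lists hosts with at least the requested number of free slots, tightest fit first, and does not look at memory or scratch, which you still need to check with lsload.

```shell
#!/bin/bash
# hosts_with_room NEED: read bhosts output on stdin and list hosts in
# status "ok" with at least NEED free slots (MAX - NJOBS), tightest fit first.
hosts_with_room() {
    local need="$1"
    awk -v need="$need" \
        'NR > 1 && $2 == "ok" && $4 - $5 >= need { print $1, $4 - $5 }' |
        LC_ALL=C sort -k2,2n
}

# Sample rows copied from the bhosts listing above.
sample='HOST_NAME STATUS JL/U MAX NJOBS RUN SSUSP USUSP RSV
compute-1-1 ok - 8 0 0 0 0 0
compute-1-13 ok - 8 4 4 0 0 0
compute-1-25 ok - 8 3 3 0 0 0
compute-1-5 ok - 8 0 0 0 0 0
compute-2-28 ok - 8 6 6 0 0 0
compute-2-31 ok - 8 3 3 0 0 0
nfs-2-2 ok - 8 2 2 0 0 0
nfs-2-3 ok - 8 5 5 0 0 0'

# A 3-slot job (for example Gaussian with %Nprocs = 3).
printf '%s\n' "$sample" | hosts_with_room 3
```

With the sample data the first line printed is `nfs-2-3 3`, the host with NJOBS = 5 that a 3-slot job would fill exactly. On the cluster the live equivalent would be `bhosts | hosts_with_room 3`.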

- Number of slots to use?

So in a typical parallel job submission you may have a line like #BSUB -n 64 requesting 64 job slots for your program. That may involve a long waiting time as the scheduler waits until that many cores become available. The waiting time is also influenced by queue policies.

One way of avoiding this is to instruct the scheduler “i'd prefer 64, but if at least 32 slots are available, go ahead and run the program”. The syntax for that is …

#BSUB -n 32,64
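With a range request the job does not know in advance how many slots it will get. The sketch below shows one way a job script might find out, assuming LSF sets `LSB_HOSTS` to a whitespace-separated list with one host name per allocated slot. The function name `count_slots`, the job name and the output file names are placeholders for illustration.

```shell
#!/bin/bash
# Sketch: request between 32 and 64 slots, then report how many were granted.
#BSUB -q imw
#BSUB -n 32,64
#BSUB -J range_demo
#BSUB -o out.%J
#BSUB -e err.%J

# count_slots: number of entries in LSB_HOSTS (assumed one host name per slot).
count_slots() {
    # Unquoted on purpose so the shell splits the list on whitespace.
    set -- $LSB_HOSTS
    echo "$#"
}

echo "scheduler granted $(count_slots) slots"
```

Outside the scheduler `LSB_HOSTS` is unset, so the script simply reports 0 slots.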

- Slot Reservation for parallel jobs?

    [root@swallowtail ~]# bqueues -l imw
    ...
    Maximum slot reservation time: 86400 seconds

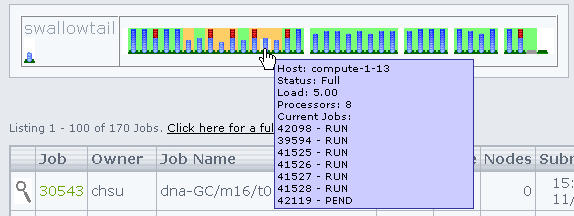

Clumon looks like this (change in background color; pink if not full, orange if full)

Parallel jobs using the imw queue often have to wait until a large number of job slots becomes available. The scheduler manages slot reservations by applying the following logic: if slots are available, jobs may reserve the slots they need for execution, meaning hold on to them. If the required number of slots is collected within the time specified (86,400 seconds equals 24 hours), the job starts. If not, the reserved slots are returned to the pool of available job slots.

Backfill scheduling allows other jobs to use these reserved job slots, as long as these jobs will not delay the start of the job that reserved the slots. This will only work if both the big parallel job and the smaller jobs wanting to use the reserved slots specify a run time with bsub -W [time] ….
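A small job that wants to be considered for backfill might declare its run limit as in the sketch below. The 2 hour limit, slot count and job name are example values only; the -W value is given as [hours:]minutes, and a job that exceeds its run limit is terminated by the scheduler, so pick a limit with some margin.

```shell
#!/bin/bash
# Sketch of a small job that declares a run limit so it can be backfilled.
#BSUB -q imw
#BSUB -n 2
# Run limit in [hours:]minutes; here 2 hours.
#BSUB -W 2:00
#BSUB -J backfill_demo
#BSUB -o out.%J
#BSUB -e err.%J

echo "job ${LSB_JOBID:-unset} started on $(uname -n)"
```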

- Resource Reservation?

This is a fairly large topic and i'm going to point you to the manual page. It is very flexible and can even handle ramp-up and ramp-down requests for resources. Once you reserve resources your job will start and be assured these resources are available. Hence it will not crash or slow way down because another job suddenly gobbled up all disk space or memory.

You are strongly encouraged to use resource reservation with bsub -R rusage[name1=value1, name2=value2, …] ….

You can use the blimits tool to query limits imposed on running jobs. These can be set by user, by queue, by hosts. We currently don't have any!

Here is the Manual Page on Resource Reservation

- Exclusive use?

Exclusive use of a particular node is currently activated. Use sparingly! It would be much preferable for you to run some test jobs and find out what resources you actually need, rather than claim everything.

There are several ways to obtain exclusive use of a node.

The ugly, brute force way: bsub -x …, no other jobs will be submitted on this host and your job is the only job allocated to this host.

The gentle, brute force way: bsub -n 8 …, if you request 8 slots on the same host, well, that's exclusive use too. This is appropriate in Gaussian since this application forks itself so you want all the processes on the same host sharing the same data & code stack.

The hog way: bsub -R "rusage[mem=NEARMAX]" …, where NEARMAX is just below the total memory of a node. Once that block is reserved, other jobs that do not specify memory needs will crash, and those that do will never run on that host.

The parallel hog way: bsub -R "span[hosts=1]" … indicates that all the processors allocated to this job must be on the same host, which may be appropriate. Compare this with "span[ptile=value]", where value is less than 8 and gives the number of slots to use on each host allocated to this job.

- Force execution?

So you have a job pending? Command bqueues reveals 5 pending jobs for the queue elw. However, the command bhosts reveals available job slots and even idle hosts.

    [root@swallowtail ~]# bqueues
    QUEUE_NAME      PRIO STATUS          MAX JL/U JL/P JL/H NJOBS  PEND   RUN  SUSP
    elw              50  Open:Active       -    -    -    -    69     5    64     0
    emw              50  Open:Active       -    -    -    -    21     0    21     0
    ehw              50  Open:Active       -    -    -    -    27     0    27     0
    ehwfd            50  Open:Active       -    -    -    -    22     0    22     0
    imw              50  Open:Active       -    -    -    -   108     0   108     0
    matlab           50  Open:Active       8    8    -    8     0     0     0     0

    [root@swallowtail ~]# bhosts | grep -v closed
    HOST_NAME          STATUS       JL/U    MAX  NJOBS    RUN  SSUSP  USUSP    RSV
    compute-1-1        ok              -      8      0      0      0      0      0
    compute-1-13       ok              -      8      4      4      0      0      0
    compute-1-25       ok              -      8      3      3      0      0      0
    compute-1-26       ok              -      8      7      7      0      0      0
    compute-1-27       ok              -      8      5      5      0      0      0
    compute-1-5        ok              -      8      0      0      0      0      0
    compute-2-28       ok              -      8      6      6      0      0      0
    compute-2-31       ok              -      8      3      3      0      0      0
    nfs-2-2            ok              -      8      2      2      0      0      0
    nfs-2-3            ok              -      8      5      5      0      0      0
    nfs-2-4            ok              -      8      7      7      0      0      0

So next we could look for the reason why these jobs are pending. The pending reasons given are somewhat lucid, but we quickly observe the queue is full (8 hosts reached their job slot limit, and 8 hosts are in this queue).

    [root@swallowtail ~]# bjobs -p -u all
    JOBID   USER  STAT  QUEUE  FROM_HOST    EXEC_HOST  JOB_NAME       SUBMIT_TIME
    35040   gng   PEND  elw    swallowtail  -          o2048L410.bat  Dec 28 15:30
     Not specified in job submission: 29 hosts;
     Job slot limit reached: 8 hosts;
    35041   gng   PEND  elw    swallowtail  -          o2048L512.bat  Dec 28 15:30
     Not specified in job submission: 29 hosts;
     Job slot limit reached: 8 hosts;
    35042   gng   PEND  elw    swallowtail  -          o2048L640.bat  Dec 28 15:30
     Not specified in job submission: 29 hosts;
     Job slot limit reached: 8 hosts;
    35043   gng   PEND  elw    swallowtail  -          o2048L768.bat  Dec 28 15:30
     Not specified in job submission: 29 hosts;
     Job slot limit reached: 8 hosts;
    35044   gng   PEND  elw    swallowtail  -          o2048L896.bat  Dec 28 15:30
     Not specified in job submission: 29 hosts;
     Job slot limit reached: 8 hosts;

Based on the bhosts information listed above, we could now force our job to run on available nodes. In this case i'm assuming we have small memory requirements and none other, so any host will do. But i'm specifically looking at filling up hosts job slot wise. Other users may have requirements for 4-8 slots on a single host.

    [root@swallowtail ~]# brun -f -m compute-1-27 35041
    Job <35041> is being forced to run.
    [root@swallowtail ~]# brun -f -m compute-1-27 35042
    Job <35042> is being forced to run.
    [root@swallowtail ~]# brun -f -m compute-1-27 35043
    Job <35043> is being forced to run.
    [root@swallowtail ~]# brun -f -m nfs-2-4 35044
    Job <35044> is being forced to run.
    [root@swallowtail ~]# brun -f -m compute-1-26 35040
    Job <35040> is being forced to run.

So the idea was to fill up host compute-1-27 with another 3 jobs, and then fill up hosts nfs-2-4 and compute-1-26 each with another job.

~/.lsbatch

When you submit jobs, files are created in your ~/.lsbatch directory. That's a “hidden” directory, notice the leading dot. Basically the scheduler copies your submission info into files. It also keeps track of standard output & error and collects it in these files.

This happens for all users. That's also the reason you should avoid writing lots of information to standard output. It will put a load on the ionode processing all those reads/writes.

But the information in these files can be useful, especially for debugging. Here is a sample.

<hi #ffff00>Please note that any ~/.lsbatch location is skipped by Tivoli Backup Policy</hi>

    [hmeij@swallowtail test]$ bsub < run
    [hmeij@swallowtail test]$ bjobs
    JOBID   USER    STAT  QUEUE  FROM_HOST    EXEC_HOST  JOB_NAME  SUBMIT_TIME
    35376   hmeij   RUN   imw    swallowtail  2*compute-1-15:2*compute-1-11:
    2*compute-1-16:2*compute-1-10:2*compute-1-14:2*compute-1-8:2*compute-1-13:
    2*compute-1-2 test Jan 2 15:02
    [hmeij@swallowtail test]$ ll ~/.lsbatch/
    total 24
    -rwx------ 1 hmeij its 2318 Jan  2 15:02 1199304166.35376
    -rw------- 1 hmeij its    0 Jan  2 15:02 1199304166.35376.err
    -rw------- 1 hmeij its   31 Jan  2 15:02 1199304166.35376.out
    -rwxr--r-- 1 hmeij its 1979 Jan  2 15:02 1199304166.35376.shell
    -rw-r--r-- 1 hmeij its   69 Jan  2 14:27 mpi_args
    -rw-r--r-- 1 hmeij its  204 Jan  2 14:27 mpi_machines
    -rw-r--r-- 1 hmeij its  204 Jan  2 14:27 mpi_machines.lst
    [hmeij@swallowtail test]$ cat ~/.lsbatch/1199304166.35376.out
    Running on IB-enabled node set
    [hmeij@swallowtail test]$ cat ~/.lsbatch/mpi_args
    -np 16 /share/apps/amber/9openmpi/exe/sander.MPI -O -i mdin -o mdout
    [hmeij@swallowtail test]$ cat ~/.lsbatch/mpi_machines.lst
    compute-1-7 compute-1-7 compute-1-15 compute-1-15 compute-1-13 compute-1-13 compute-1-14 compute-1-14 compute-1-16 compute-1-16 compute-1-10 compute-1-10 compute-1-11 compute-1-11 compute-1-2 compute-1-2

MPI

Those of you running programs compiled with mpicc or mpif90, in other words using MPI, should change the references to the “wrapper” scripts. Below are listed the names of the new wrapper scripts. You may also wish to read MPI & LSF.

- change the references to the old lava wrapper scripts to the new lsf wrapper scripts:

/share/apps/bin/lsf.topspin.wrapper

/share/apps/bin/lsf.openmpi.wrapper

/share/apps/bin/lsf.openmpi_intel.wrapper

- you do not have to specify the -n option anymore when invoking your application, the BSUB line is enough: #BSUB -n 4

Q&A

- Can i receive email notification when my job is done?

This can easily be answered by looking at the manual page for bsub. You can omit directives to write standard output and standard error to disk and they will be mailed. But they may be large and it is convenient to have them on disk. What you need to specify is:

#BSUB -u username

Note: the -u option appears broken, or it does not do what i think it does. Will investigate. — Meij, Henk 2008/01/17 11:37

But an option that works with the -o, -e and -J options is

#BSUB -N

It will send you email when the job is done.

- What jobs recently finished?

Use the -d option of command bjobs. It reports jobs that finished within the last hour.

    [root@swallowtail configdir]# bjobs -d -u all
    JOBID   USER      STAT  QUEUE  FROM_HOST    EXEC_HOST       JOB_NAME   SUBMIT_TIME
    35397   bstewart  EXIT  emw    swallowtail  compute-1-26    Molscat36  Jan  3 10:42
    35266   qgu       DONE  imw    swallowtail  8*compute-1-1   Gaussian   Jan  2 12:41
    35537   hmeij     EXIT  imw    swallowtail  2*compute-1-13  mpi.lsf    Jan  3 15:55
    35538   hmeij     EXIT  imw    swallowtail  2*compute-1-8   mpi.lsf    Jan  3 16:01

- …