

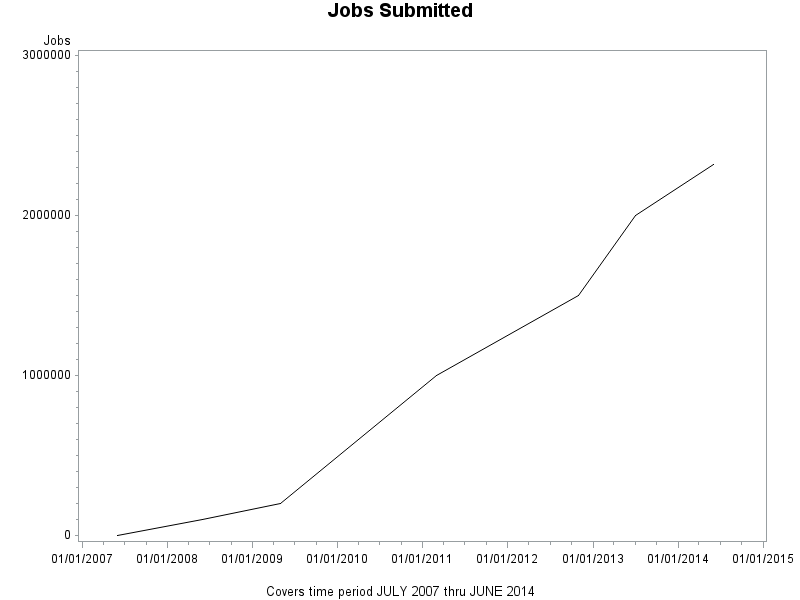

Total Jobs Submitted

Just because I keep track

- 06/01/2007: 0
- 06/01/2008: 100,000
- 05/01/2009: 200,000
- 03/01/2011: 1,000,000
- 11/01/2012: 1,500,000
- 07/01/2013: 2,000,000
- 06/01/2014: 2,320,000
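The cumulative job totals above can be turned into an average submission rate between checkpoints. This is a minimal sketch. It assumes the dates are month/day/year and uses an average year length of 365.25 days.

```python
from datetime import date

# Cumulative jobs submitted, as recorded on this page (month/day/year).
CHECKPOINTS = [
    (date(2007, 6, 1), 0),
    (date(2008, 6, 1), 100_000),
    (date(2009, 5, 1), 200_000),
    (date(2011, 3, 1), 1_000_000),
    (date(2012, 11, 1), 1_500_000),
    (date(2013, 7, 1), 2_000_000),
    (date(2014, 6, 1), 2_320_000),
]


def jobs_per_year(checkpoints):
    """Return (start, end, average jobs/year) for each pair of consecutive checkpoints."""
    rates = []
    for (d0, n0), (d1, n1) in zip(checkpoints, checkpoints[1:]):
        years = (d1 - d0).days / 365.25  # elapsed time in average-length years
        rates.append((d0, d1, (n1 - n0) / years))
    return rates


if __name__ == "__main__":
    for start, end, rate in jobs_per_year(CHECKPOINTS):
        print(f"{start:%m/%Y} to {end:%m/%Y}: ~{rate:,.0f} jobs/year")
```

The rates are only as accurate as the checkpoints, which are rounded totals.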

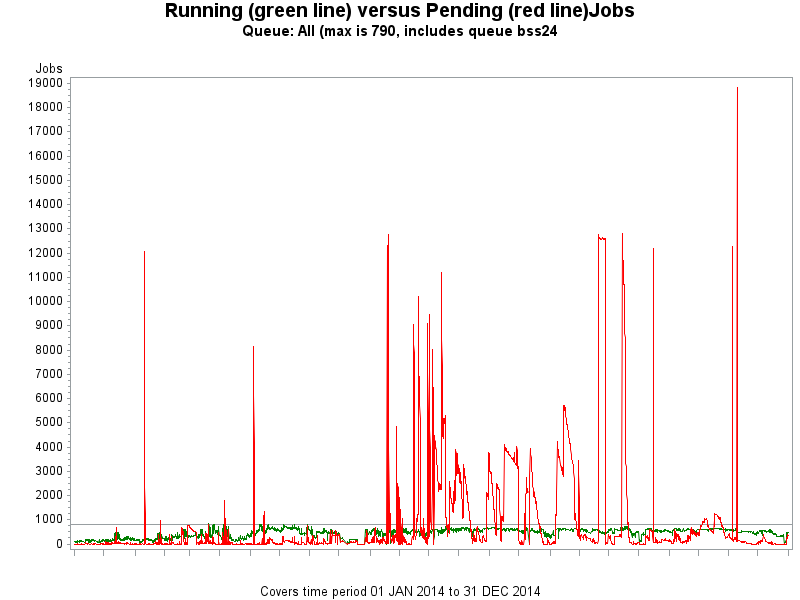

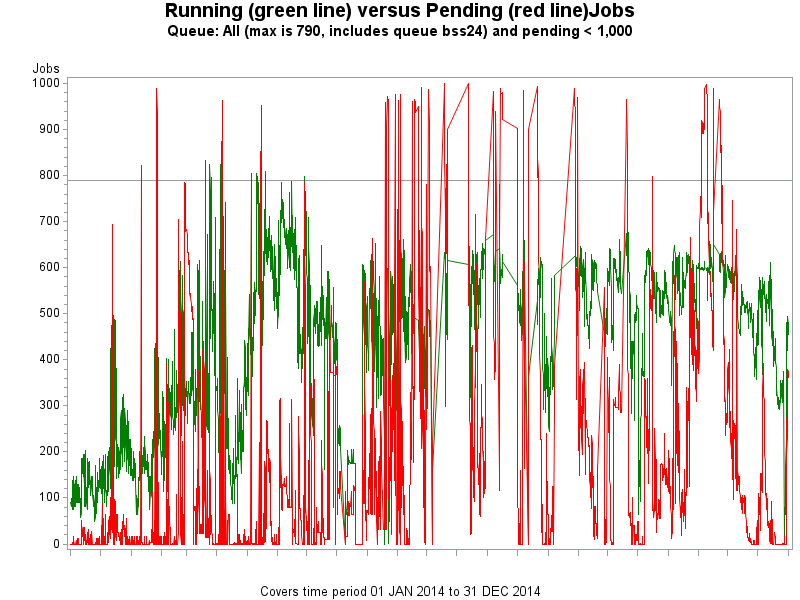

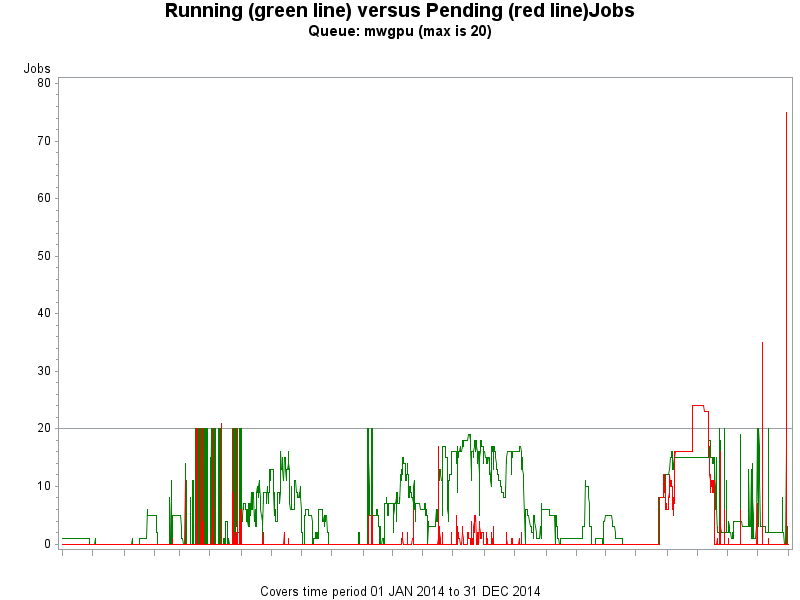

2014 Queue Usage

For other years view: 2013 Queue Usage, 2012 Queue Usage, 2011 Queue Usage …

Accounts

- Users
  - About 200 user accounts have been created since early 2007.
  - At any point in time 12-18 users may be active; activity rotates in cycles.
  - There are 22 permanent collaborator accounts (faculty/researchers at other institutions).
  - There are 100 generic accounts for classroom use (recycled each semester).
  - Perhaps 1-2 classes per semester use the HPCC facilities.
- Depts
  - ASTRO, BIOL, CHEM, ECON, MATH/CS, PHYS, PSYC, QAC, SOC
  - Most active are CHEM and PHYS.

Hardware

This is a brief summary of the current configuration. A more detailed version can be found in the Brief Guide to HPCC write-up.

- 52 old nodes (Blue Sky Studio donation, circa 2002) provide access to 104 job slots and 1.2 TB of memory.

- 32 new nodes (HP blades, 2010) provide access to 256 job slots and 384 GB of memory.

- 13 newer nodes (Microway/ASUS, 2013) provide access to 396 job slots and 3.3 TB of memory.

- 5 Microway/ASUS nodes provide access to 20 K20 GPUs for a total of 40,000 cores and 160 GB of memory.

- Total computational capacity is near 30.5 Teraflops (trillion, i.e. 10^12, floating-point operations per second).
  - The GPUs account for 23.40 Teraflops of that total (double precision; single precision is in the 100+ range).

- All nodes (except the old nodes) are connected to QDR InfiniBand interconnects (switches).
  - This provides a high-throughput, low-latency environment for fast traffic.
  - /home is served over this environment using IPoIB for fast access.
- All nodes are also connected to standard gigabit Ethernet switches.
  - The OpenLava scheduler uses this environment for job dispatching and monitoring.
- The entire environment is monitored with custom scripts and Zenoss.

- Two 48 TB disk arrays are present in the HPCC environment, carved up roughly as follows:
  - 10 TB each: sharptail:/home and greentail:/oldhome (the latter for disk-to-disk backup)
    - There is no “off-site” backup destination.
  - 5 TB each: sharptail:/sanscratch and greentail:/sanscratch (scratch space for nodes n33-n45, and for nodes n1-n32 & b0-b51, respectively)
  - 15 TB: sharptail:/snapshots (for daily, weekly and monthly backups)
  - 15 TB: greentail:/oldsnapshots, to be reallocated (currently used for virtualization tests)
  - 7 TB each: sharptail:/archives_backup and greentail:/archives (for static user files not in /home)
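The hardware figures above can be tallied with a few lines of Python. This is a sketch under stated assumptions. TB is converted to GB decimally (1 TB = 1000 GB), which is an approximation. The 5 GPU nodes are left out of the CPU totals because the page does not say whether they overlap with the 13 Microway/ASUS nodes.

```python
# Figures copied from the hardware list; memory in GB, with
# 1.2 TB and 3.3 TB converted decimally (an approximation).
NODE_GROUPS = {
    # group: (nodes, job_slots, memory_gb)
    "old nodes (2002)": (52, 104, 1200),
    "HP blades (2010)": (32, 256, 384),
    "Microway/ASUS (2013)": (13, 396, 3300),
}
TOTAL_TFLOPS = 30.5
GPU_TFLOPS = 23.40  # double precision, 20 K20 GPUs

# Rough allocation of each 48 TB disk array, in TB.
ARRAY_SIZE_TB = 48
ALLOCATIONS_TB = {
    "sharptail": {"/home": 10, "/sanscratch": 5, "/snapshots": 15, "/archives_backup": 7},
    "greentail": {"/oldhome": 10, "/sanscratch": 5, "/oldsnapshots": 15, "/archives": 7},
}


def cpu_totals(groups):
    """Sum nodes, job slots and memory (GB) over the CPU node groups."""
    nodes = sum(n for n, _, _ in groups.values())
    slots = sum(s for _, s, _ in groups.values())
    memory_gb = sum(m for _, _, m in groups.values())
    return nodes, slots, memory_gb


def cpu_tflops(total=TOTAL_TFLOPS, gpu=GPU_TFLOPS):
    """Capacity attributable to the CPUs once the GPU share is subtracted."""
    return total - gpu


def unallocated_tb(array):
    """Space on one array not covered by the rough breakdown."""
    return ARRAY_SIZE_TB - sum(ALLOCATIONS_TB[array].values())


if __name__ == "__main__":
    nodes, slots, memory_gb = cpu_totals(NODE_GROUPS)
    print(f"CPU nodes: {nodes}, job slots: {slots}, memory: ~{memory_gb / 1000:.1f} TB")
    print(f"CPU share: ~{cpu_tflops():.1f} TF, GPU share: {GPU_TFLOPS / TOTAL_TFLOPS:.0%}")
    for array in ALLOCATIONS_TB:
        print(f"{array}: {unallocated_tb(array)} TB not in the breakdown")
```

The listed allocations add up to 37 TB per array. The page does not say what the remaining 11 TB on each array is used for.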

Software

An extensive list of installed software is detailed on the Software page. Some highlights:

- Commercial software
  - Matlab, Mathematica, Stata, SAS, Gaussian
- Open source examples
  - Amber, Lammps, Omssa, Gromacs, Miriad, Rosetta, R/Rparallel
- Main compilers
  - Intel (icc/ifort), gcc
  - OpenMPI mpicc
  - MVApich2 mpicc
  - Nvidia nvcc
- GPU-enabled software
  - Matlab, Mathematica
  - Gromacs, Lammps, Amber, NAMD

Publications

A summary of articles that have used the HPCC (this still needs work!)

Expansion

Our main problem is one of flexibility. The number of cores per node is fixed, so one or a few small-memory jobs running on a Microway node can leave large chunks of its memory idle. Virtualization could provide a more flexible environment: create small, medium and large memory templates, clone nodes from the templates as needed, and recycle the nodes when they are no longer needed to free up resources. This would also enable us to serve up other operating systems if needed (SUSE, Ubuntu, Windows).
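The template idea can be sketched as a simple best-fit selection. In this sketch a job gets the smallest template that satisfies its core and memory request, so a small-memory job no longer strands the memory of a large node. The template names and sizes below are hypothetical; the page does not specify any.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Template:
    name: str
    vcpus: int
    memory_gb: int


# Hypothetical template sizes, for illustration only.
TEMPLATES = [
    Template("small", 1, 4),
    Template("medium", 4, 32),
    Template("large", 16, 256),
]


def pick_template(cores, memory_gb, templates=TEMPLATES):
    """Return the smallest template (by memory, then vCPUs) that fits the request."""
    fitting = [t for t in templates if t.vcpus >= cores and t.memory_gb >= memory_gb]
    if not fitting:
        raise ValueError(f"no template fits {cores} cores / {memory_gb} GB")
    return min(fitting, key=lambda t: (t.memory_gb, t.vcpus))


def idle_memory_gb(cores, memory_gb):
    """Memory cloned into the virtual node but not requested by the job."""
    return pick_template(cores, memory_gb).memory_gb - memory_gb


if __name__ == "__main__":
    for request in [(1, 2), (2, 8), (8, 100)]:
        t = pick_template(*request)
        print(f"{request} -> {t.name}, idle: {idle_memory_gb(*request)} GB")
```

A real deployment would also have to handle cloning and recycling the virtual nodes. This sketch covers only the sizing decision.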

Several options are available to explore:

These options would require sufficiently sized hardware that can then be logically presented as virtual nodes (with virtual CPUs, virtual disks and virtual networking on board).

Costs

Here is a rough listing of what costs the HPCC generates and who pays the bill. Acquisition costs have so far been covered by faculty grants and the Dell hardware/Energy savings project.

- Recurring
  - Sysadmin, 0.5 FTE of a salaried employee, annual.
    - Largest contributor dollar-wise, ITS.
  - Energy consumed in the data center for power and cooling, annual.
    - At peak performance, power draw is estimated at 30.5 kW, which translates to $32K/year.
    - Add 50% for cooling energy needs (we're assuming efficient, green hardware).
    - Total energy costs at 75% performance: $36K/year, Physical Plant.
  - Software (Matlab, Mathematica, Stata, SAS)
    - Renewals included in the ITS/ACS software suite, annual.
    - Hard to estimate; a low dollar amount.

- Miscellanea
  - Intel Compiler, once every 3-4 years (each time we buy new racks), $2,500, ITS/ACS.
  - One year of extended HP hardware support.
    - To cover the period while /home moved from greentail to sharptail, $2,200, ITS/TSS.
  - Contributed funds to the GPU HPC acquisition
    - DLB Josh Boger, $5-6K
    - QAC, $2-3K
    - Physics, $1-2K
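The energy estimate can be reproduced as follows. Several assumptions apply. The 30.5 kW figure is treated as a continuous draw at peak over 8,760 hours per year. Cost is assumed to scale linearly with utilization. The page does not state an electricity rate, so the roughly $0.12/kWh used here is back-calculated from the $32K/year figure.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def implied_rate(peak_kw, annual_power_cost):
    """Electricity price ($/kWh) implied by a yearly cost at constant draw."""
    return annual_power_cost / (peak_kw * HOURS_PER_YEAR)


def annual_energy_cost(peak_kw, rate_per_kwh, cooling_overhead=0.5, utilization=0.75):
    """Yearly power-plus-cooling cost, scaled linearly by utilization."""
    power_cost = peak_kw * HOURS_PER_YEAR * rate_per_kwh
    return power_cost * (1 + cooling_overhead) * utilization


if __name__ == "__main__":
    rate = implied_rate(30.5, 32_000)
    print(f"implied rate: ${rate:.3f}/kWh")
    print(f"power + cooling at 75%: ${annual_energy_cost(30.5, rate):,.0f}/year")
```

This matches the page's arithmetic: $32K × 1.5 (cooling) × 0.75 (utilization) = $36K/year.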